Quick Verdict

BerriAI-litellm (LiteLLM) provides a unified API format that lets developers call more than 100 LLM APIs using OpenAI’s familiar structure, so there is no need to learn a different API for each provider. The core tool is free, which makes it accessible for experimentation, but it ships without a ready-made interface for non-technical users, and its more advanced security and user-management features sit under the enterprise support offering. This tool is best for developers and researchers who need to test multiple LLMs through a consistent interface on little or no budget.

BerriAI-litellm – AI Language Model Integration

- Category: AI Chatbots, Education, Research

- Pricing: Free

- Best for: Developers integrating multiple AI models

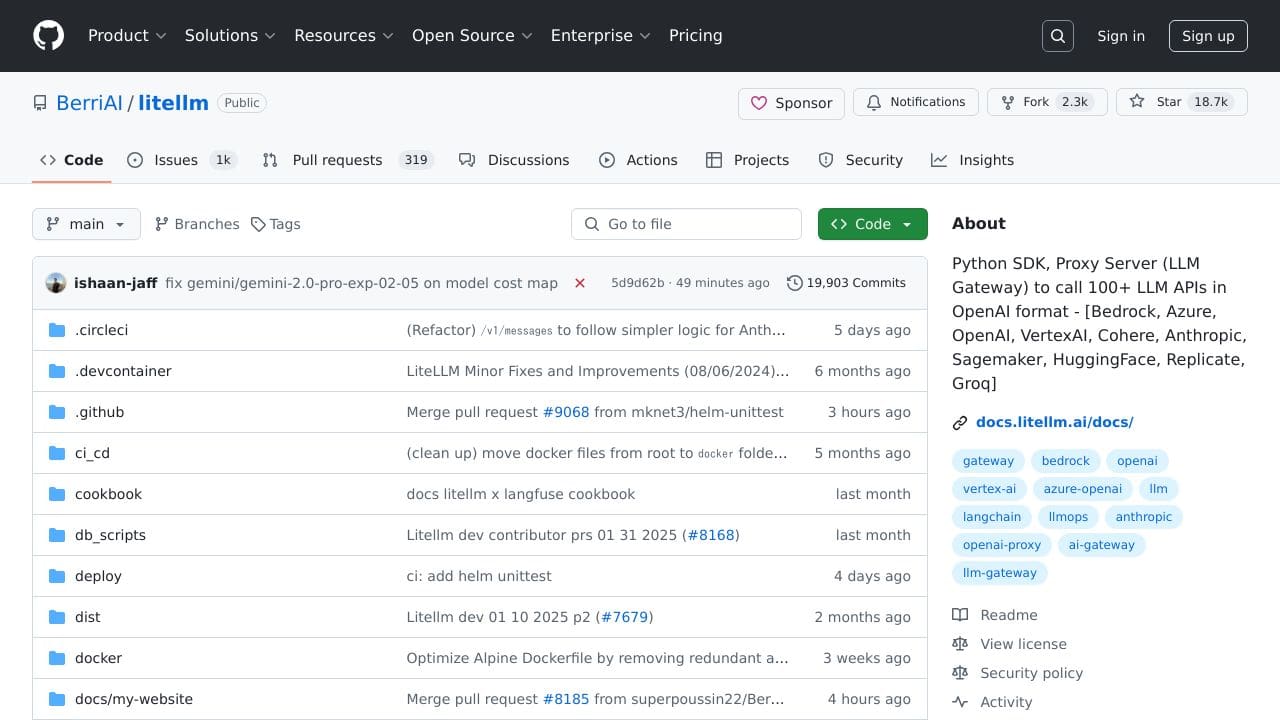

Background Check on BerriAI-litellm – AI Language Model Integration

We ran a background check on github.com to verify its safety, security posture, hosting infrastructure, and web history. Here are the results as of April 18, 2026.

✓ Content Security Policy (CSP), Cookies, Cross Origin Resource Sharing (CORS), Redirection, Referrer Policy, Strict Transport Security (HSTS)

✗ Subresource Integrity

Source: Mozilla Observatory report

What is LiteLLM?

LiteLLM is a Python SDK and proxy server that simplifies calling over 100 large language model (LLM) APIs using the OpenAI format. Whether you’re working with Bedrock, Azure, OpenAI, VertexAI, or other providers like HuggingFace and Cohere, LiteLLM acts as a universal gateway, so you can integrate and manage multiple LLMs without handling a different API format for each one. Think of it as a universal remote for AI models: one tool in place of a drawer full of remotes.

LiteLLM Features

- Unified API Format: Call any LLM API using the OpenAI format, ensuring consistency across different models.

- Streaming Support: Get real-time responses with streaming capabilities for all supported models.

- Retry and Fallback Logic: Automatically retries failed requests and falls back to alternative models for reliability.

- Budget and Rate Limiting: Set budgets and rate limits per project, API key, or model to control costs and usage.

- Proxy Server: Host your own LLM gateway with features like key management, load balancing, and cost tracking.

- Observability: Integrate with tools like Lunary, MLflow, and Slack for monitoring and logging.

- Enterprise Support: Advanced features for companies needing better security, user management, and professional support.
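To make the retry-and-fallback idea from the list above concrete, here is a minimal hand-written sketch. It is not LiteLLM's built-in retry or fallback mechanism; it only illustrates the pattern on top of the `completion` call. The helper names (`litellm_call`, `complete_with_fallback`) and their parameters are hypothetical, and the live call assumes `pip install litellm` plus provider API keys in the environment.

```python
import time
from typing import Callable, Dict, List, Optional, Sequence, Tuple


def litellm_call(model: str, messages: List[Dict[str, str]]) -> str:
    """Send one request through LiteLLM and return the reply text."""
    # Imported lazily so the rest of the sketch works without the package.
    from litellm import completion

    response = completion(model=model, messages=messages)
    return response.choices[0].message.content


def complete_with_fallback(
    models: Sequence[str],
    messages: List[Dict[str, str]],
    call: Callable[[str, List[Dict[str, str]]], str] = litellm_call,
    retries_per_model: int = 2,
    backoff_seconds: float = 0.0,
) -> Tuple[str, str]:
    """Try each model in order and return (model_used, reply_text)."""
    last_error: Optional[Exception] = None
    for model in models:
        for attempt in range(retries_per_model):
            try:
                return model, call(model, messages)
            except Exception as exc:  # broad on purpose: any failure triggers a retry
                last_error = exc
                # Linear backoff: wait longer after each failed attempt.
                time.sleep(backoff_seconds * (attempt + 1))
    raise RuntimeError("all models failed") from last_error
```

Passing `call` as a parameter keeps the loop testable with a stub. In real use you would pass a list of model identifiers that your LiteLLM installation actually supports.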

LiteLLM Use Cases

- Developers: Use LiteLLM to integrate multiple LLMs into your applications without worrying about API compatibility. For example, you can build a chatbot that switches between OpenAI and HuggingFace models based on user needs.

- AI Researchers: Experiment with different models from various providers using a single interface. Need to compare GPT-4 with Anthropic’s Claude? LiteLLM makes it smooth.

- Enterprises: Manage large-scale AI deployments with features like load balancing and cost tracking. Imagine running a customer support system powered by multiple LLMs without breaking the bank.

- Data Scientists: Access diverse models for tasks like sentiment analysis, text summarization, or language translation. For instance, use Cohere for summarization and OpenAI for sentiment analysis, all through LiteLLM.

- Startups: Quickly prototype AI-powered solutions without getting bogged down by API complexities. LiteLLM is your shortcut to innovation.
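Two of the use cases above, routing tasks to different providers and comparing models on the same prompt, can be sketched in a few lines. This is an illustration under assumptions: the task table, the helper names, and the model identifiers (`command-r`, `gpt-4o-mini`) are examples chosen here, so check the LiteLLM provider documentation for the identifiers it currently accepts.

```python
from typing import Callable, Dict, Sequence

# Hypothetical task-to-model table; the identifiers are placeholders.
TASK_MODELS: Dict[str, str] = {
    "summarization": "command-r",
    "sentiment": "gpt-4o-mini",
}


def pick_model(task: str, default: str = "gpt-4o-mini") -> str:
    """Return the model configured for a task, or the default."""
    return TASK_MODELS.get(task, default)


def ask(model: str, prompt: str) -> str:
    """Send a single-turn prompt through LiteLLM (needs the package and API keys)."""
    from litellm import completion  # lazy import: only needed for live calls

    response = completion(model=model, messages=[{"role": "user", "content": prompt}])
    return response.choices[0].message.content


def compare(
    models: Sequence[str],
    prompt: str,
    ask_fn: Callable[[str, str], str] = ask,
) -> Dict[str, str]:
    """Run the same prompt against several models and collect the replies."""
    return {model: ask_fn(model, prompt) for model in models}
```

Because every provider is reached through the same call shape, adding a model to the comparison means adding one string to the list rather than writing a new client.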

How BerriAI-litellm – AI Language Model Integration Compares to Alternatives

When choosing AI chatbot integration tools, key factors include API consistency, model variety, and cost structure. BerriAI-litellm stands out for developers who prioritize standardization across different language models while keeping costs at zero.

| Tool | Best For | Pricing |
|---|---|---|
| BerriAI-litellm | Developers needing a unified OpenAI format to call any LLM API with streaming support | Free, no cost for core features |
| Hawil AI | Businesses requiring AI-native CRM with integrated voice agents and chat functionality | Paid, enterprise-focused pricing |
| Amallo | Users who want direct chat access to multiple top-tier AI models through one interface | Paid, subscription model |
| CometAPI | Teams needing access to 500+ AI models through a single comprehensive API gateway | Paid, volume-based pricing |

Best For

- Developers building applications that need to switch between different LLM providers

- Researchers comparing performance across multiple language model APIs

- Startups prototyping AI features without budget for API standardization tools

- Technical teams implementing real-time streaming responses from various models

Not Ideal For

- Non-technical users wanting ready-to-use chatbot interfaces without coding

- Enterprises needing built-in compliance, monitoring, and team-management features

- Projects requiring extensive pre-built templates or drag-and-drop chatbot builders

Getting Started

Begin by installing the litellm package and configuring your first model endpoint using the OpenAI format. Test with a simple completion call to verify connectivity, then explore the streaming feature for real-time responses. The documentation provides specific examples for each model provider.
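The steps above might look roughly like the following sketch. It assumes the package was installed with `pip install litellm`, that an `OPENAI_API_KEY` environment variable is set, and that `gpt-4o-mini` is an available model; the function names are hypothetical and the model name is only an example.

```python
from typing import Dict, Iterable, List


def build_messages(prompt: str) -> List[Dict[str, str]]:
    """Wrap a prompt in the OpenAI-style messages list."""
    return [{"role": "user", "content": prompt}]


def first_completion(prompt: str, model: str = "gpt-4o-mini") -> str:
    """One blocking completion call, useful as a connectivity check."""
    from litellm import completion  # lazy import: requires the litellm package

    response = completion(model=model, messages=build_messages(prompt))
    return response.choices[0].message.content


def collect_stream(chunks: Iterable) -> str:
    """Join the text deltas of a streamed response into one string."""
    parts = []
    for chunk in chunks:
        delta = chunk.choices[0].delta.content
        if delta:  # some chunks carry no text, for example the final one
            parts.append(delta)
    return "".join(parts)


def stream_completion(prompt: str, model: str = "gpt-4o-mini") -> str:
    """Same request with stream=True, consumed chunk by chunk."""
    from litellm import completion

    chunks = completion(model=model, messages=build_messages(prompt), stream=True)
    return collect_stream(chunks)
```

With the key in place, `print(first_completion("Say hello in one sentence."))` should confirm connectivity, and `stream_completion` returns the same kind of text assembled from the streamed pieces.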

Key Limitations to Consider

- No built-in user interface or dashboard for non-technical team members

- Limited to API integration without pre-built chatbot templates or design tools

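- Usage analytics, team permissions, and billing management are not available out of the box; they depend on running the proxy server, connecting external observability tools, or taking up enterprise support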

- Requires technical knowledge to set up and maintain compared to no-code alternatives

- The free pricing model may mean limited support and no guaranteed uptime

Related Workflows and Tool Pairings

BerriAI-litellm serves as the middleware layer in AI application development, sitting between your application code and various language model providers. Developers typically use it alongside frontend frameworks like React or Vue to build chat interfaces, and backend services for user authentication and data persistence. For complete solutions, pair it with monitoring tools to track API performance and cost management platforms to optimize spending across different model providers. The tool integrates well with existing CI/CD pipelines, allowing teams to test different models during development without changing their core application code.
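One way to get the "swap models without touching application code" behavior described above is to read the model name from configuration. The sketch below assumes a hypothetical `LLM_MODEL` environment variable that a CI job or deployment could set; that variable, the helper names, and the default model are illustrative choices and not part of LiteLLM itself.

```python
import os
from typing import Callable, Mapping, Optional

DEFAULT_MODEL = "gpt-4o-mini"  # example identifier, not a recommendation


def resolve_model(env: Optional[Mapping[str, str]] = None) -> str:
    """Pick the model from the hypothetical LLM_MODEL variable, else the default."""
    env = os.environ if env is None else env
    return env.get("LLM_MODEL") or DEFAULT_MODEL


def litellm_reply(model: str, prompt: str) -> str:
    """Single-turn request through LiteLLM (needs the package and API keys)."""
    from litellm import completion  # lazy import: only needed for live calls

    response = completion(model=model, messages=[{"role": "user", "content": prompt}])
    return response.choices[0].message.content


def answer(
    prompt: str,
    env: Optional[Mapping[str, str]] = None,
    send: Callable[[str, str], str] = litellm_reply,
) -> str:
    """Application-facing entry point: the caller never names a model."""
    return send(resolve_model(env), prompt)
```

A pipeline could then run the same test suite several times with different `LLM_MODEL` values, while the application keeps calling `answer(prompt)` unchanged.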

Related tools to explore: 123GPT.AI – Intelligent Support Chatbots, AI Charfriend – NSFW Chatbot Platform, AI LMS by Coursebox – AI Education Course Creator, AI Yacht Chat – Yachting Industry Assistant, AI.LS – Enhanced Chatbot Interface, AI21 Studio – AI Professional Headshot Creation, AI Chatbots tools, Education tools

Conclusion

LiteLLM is a strong option for anyone working with large language models. Its ability to unify over 100 LLM APIs behind one interface, combined with features like streaming, retry logic, and cost management, makes it a practical tool for developers, researchers, and enterprises alike. Whether you’re building the next big AI application or just experimenting with models, LiteLLM simplifies the process and saves you time and effort.

Pricing

BerriAI-litellm – AI Language Model Integration is a free AI chatbots tool. No payment is required to get started.

Frequently Asked Questions

What is BerriAI-litellm – AI Language Model Integration?

LiteLLM is a Python SDK and proxy server designed to simplify calling over 100 large language model (LLM) APIs using the OpenAI format, whether you’re working with Bedrock, Azure, OpenAI, VertexAI, or other providers.

Is BerriAI-litellm – AI Language Model Integration free?

Yes, BerriAI-litellm – AI Language Model Integration is completely free to use.

What are the best BerriAI-litellm – AI Language Model Integration alternatives?

There are many AI chatbot tools available. The comparison table in this review lists Hawil AI, Amallo, and CometAPI as alternatives. Browse our AI Chatbots tools directory to compare features, pricing, and reviews.

Last verified: April 2026
